
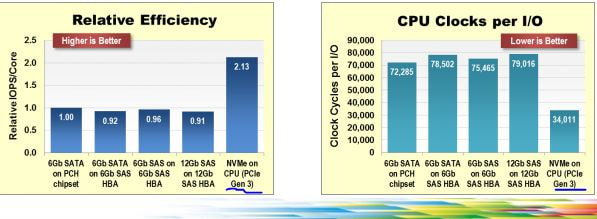

SSDs arrived on the data scene more than a decade ago and were used, for example, in the MySQL database storage tier at one of my (then) organizations. But the promise of SSD was not really realized, because access to SSDs was still bottlenecked by traditional storage protocol stacks, controllers, etc. There was plenty of acknowledgement that the software mechanisms needed to change to keep up with the hardware.

With the recent bump in raw IOPS and sequential throughput, and with latency getting down to around 10 microseconds, it seems there is little excitement left on the hardware front, especially now that a lot more of the protocol stack work is getting done. To me, the more exciting frontier is the NVM Express protocol design, which shows how more data can be consumed with lower CPU utilization (the figure is used as-is from the NVM Express documents). The next wave, NVM Express over Fabrics, should make its case for scaling out storage.

With this in mind, and a demo rig build, I am starting to explore the optimal setup for data transformation, storage, and analytics. I am also watching for pioneering vendors, e.g. Tegile or Pure Storage, that provide SSD hardware utilizing these optimizations.

Strange, isn't it, that it should take more than a decade to get things done the right way? The "strange" part is a rhetorical question, of course. But with vision and support it could have been done from the get-go, so what do you think went missing?


About Sarbjit Parmar

A practitioner with technical and business knowledge in areas of Data Management (online transaction processing, data modeling (relational, hierarchical, dimensional, etc.), S/M/L/XL/XXL & XML data, application design, batch processing, analytics (reporting + some statistical analysis), MBA+DBA), Project Management / Product/Software Development Life Cycle Management.

Archives

March 2018
